My new website is here: www.geekgirl.io

Git: Amending last commit

If you accidentally forget to add a file, or need to make one more change to a file, after committing with git, don’t fret: the solution is simple.

1. Make the change and add it locally with

git add .

2. Commit the change with

git commit --amend

3. You can then verify that the amended commit has replaced the previous one by running

git log --stat

Git: Ignoring files

You can ignore files on a project level or have a global ignore file. Git will recursively ignore the specified file patterns.

1. Create a file in a safe location where it won’t be deleted easily.

$ vi ~/.gitignore_global

2. Add the following commonly ignored files and directories.

# Eclipse #

target/

bin/

bin-debug/

.svn/

html-template/

# Compiled source #

###################

*.com

*.class

*.dll

*.exe

*.o

*.so

# Packages #

############

# it's better to unpack these files and commit the raw source

# git has its own built in compression methods

*.7z

*.dmg

*.gz

*.iso

*.jar

*.rar

*.tar

*.zip

# OS generated files #

######################

.DS_Store

ehthumbs.db

Thumbs.db

Ignoring a directory in git: directoryName/

Ignore a file in git: *.jar or .DS_Store

3. The last step you need to do is associate this file in the git config.

git config --global core.excludesfile ~/.gitignore_global

This will create an entry in your ~/.gitconfig file.

Reference:

Git Ignore Doco

Internet Censorship in Australia

In the spirit of Ada Lovelace Day I’ve decided to make this blog post about the proposed internet censorship laws in Australia currently championed by Telecommunications Minister Stephen Conroy. I’m very passionate about freedom of speech and freedom of information. My earliest memory of censorship outrage was when I found out that the satirical novel American Psycho, by Bret Easton Ellis, was actually banned in my home state of Queensland. This is despite the fact that the Office of Film and Literature Classification Guidelines state that “adults should be able to read, hear and see what they want”.

If you want to read more about some of the more interesting censorship laws in place in Australia then perhaps do some investigation into why activist group Anonymous called their recent protest “Operation Titstorm”.

Classification

The reason why American Psycho is banned in some states is that it received an “R” (Restricted) classification from the Australian Communications and Media Authority, ACMA. Similarly, for electronic materials ACMA has created another classification, “RC”, Refused Classification. Any site deemed RC will go on the government’s URL blacklist for possible filtering. Stephen Conroy has been marketing his censorship policy as a way of “protecting the children”, but websites can be classified as RC not only for child pornography but also for “depictions of bestiality, material containing excessive violence or sexual violence, detailed instruction in crime, violence or drug use, and/or material that advocates the doing of a terrorist act”.

Recently Conroy approached Google and asked it to implement search filtering on YouTube for anything that falls under the RC classification, to which a Google spokeswoman replied that Google “won’t comply voluntarily with the broad scope of all RC content”. More here.

I applaud Google for taking a firm stance on this issue. Once we start filtering, where does it stop? Isn’t this sounding a little too much like China?

Filtering

In December 2009 the Labor government announced it would go ahead with plans to censor internet access after a government-commissioned ISP trial found that filtering a blacklist of banned URLs didn’t slow down web access. So? I don’t care about speed (well, I do, but it comes much further down the list); what I care about is how this censorship infringes on my right to freedom of information.

From the beginning this policy has been very hazy about what exactly goes on this blacklist and who maintains it.

Furthermore, why should ISPs be shouldering this responsibility anyway? Will they be subsidised by the government for increased administration costs?

Conroy himself has recently filtered his own website by removing “ISP Filtering” from his tag cloud. Ahh the hypocrisy of politics.

Where do we currently stand?

At present these laws have not been passed by the Senate, and as it’s a sensitive topic it looks likely that it won’t be mentioned much in the lead-up to the upcoming election.

I will be watching this topic closely to see what all parties have to say about it because this will swing my vote.

What do I think?

I’m opposed to ISP filtering and other search filtering because:

- I believe in freedom of information for everyone in every country

- There is no transparency over what goes on this blacklist and who maintains it

- RC is too broad

- The government could potentially add anything to this blacklist under the tenuous guise that it’s RC, potentially restricting access to content harmful to its own agenda

- It will be easy to get around for a somewhat technically savvy person, so what’s the point of it?

- I feel patronised that the government is telling me what is and isn’t appropriate for me to view – don’t get me started on the video game classification debate – GRR

Concurrency and Deadlocks in Java: A Brief Introduction

To understand how concurrency works in Java, it helps to lay the foundation by discussing process management in operating systems before moving on to specific concurrency issues in Java.

Process Management in Operating Systems

A process is an instance of a computer program that performs some action. A process can have multiple threads and can be controlled by a user, other programs or the operating system that it’s running on. On a single processor the operating system executes instructions sequentially, yet it can have multiple processes in progress at any one time; this is called concurrency.

To explain further: a single computer processor runs instructions one at a time, so time-sharing is used to let a user run several programs at once. With time-sharing a program is in either an “executing” or a “waiting to be executed” state. This gives the user the illusion that multiple processes are running at the same time, when in reality the operating system is switching between them rapidly. An operating system running on a computer with multiple processors can execute instructions for different processes simultaneously.

Mutexes and Threads

A mutex (mutual exclusion) is used in parallel programming to “lock” a shared resource so that only one thread can access it at a time, whilst giving waiting threads a place to wait for their turn. A popular way to remember this is to think of an airplane bathroom. Only one person can use the bathroom at a time; the lock on the door is the mutex, so when the door is locked the bathroom is unavailable to the people waiting in the queue.

In Java every object has one monitor and mutex associated with it. A monitor is a piece of code which is guarded by a mutex. Whenever a thread enters a synchronized method, the mutex is locked; when the method finishes, the mutex is unlocked. This ensures that only one synchronized method is running at a time on a given object.
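As a minimal sketch of the paragraph above, the class below guards a counter with synchronized methods. The class name SafeCounter and the helper runDemo are made up for this illustration; only the synchronized keyword and the Thread API come from Java itself.

```java
// Hypothetical example class; the names are not from any library.
final class SafeCounter {
    private int count = 0;

    // Only one thread at a time can be inside a synchronized method of this instance.
    public synchronized void increment() {
        count++;
    }

    public synchronized int get() {
        return count;
    }

    // Starts `threads` threads that each call increment() `perThread` times,
    // waits for them all, and returns the final count.
    static int runDemo(int threads, int perThread) {
        SafeCounter counter = new SafeCounter();
        Thread[] workers = new Thread[threads];
        for (int t = 0; t < threads; t++) {
            workers[t] = new Thread(() -> {
                for (int i = 0; i < perThread; i++) {
                    counter.increment();
                }
            });
            workers[t].start();
        }
        try {
            for (Thread worker : workers) {
                worker.join();
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting", e);
        }
        return counter.get();
    }

    public static void main(String[] args) {
        System.out.println(runDemo(4, 10_000)); // prints 40000
    }
}
```

Without the synchronized keyword on increment(), two threads could read the same old value of count and one of the updates would be lost.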

Deadlocks and Prevention

A deadlock occurs when two or more threads are each waiting for a lock that another of them holds, so none of them can ever proceed.

Lock Ordering

One way to prevent a deadlock is to assign an order to the locks and require that they are always acquired in that order. This only works if you know about all the locks ahead of time, so it is not always practical.
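Here is a rough sketch of lock ordering, assuming a made-up Account class whose unique id decides the order in which the two monitors are taken. None of these names come from a library; it is just one way the idea could look.

```java
// Hypothetical example class; ids are assumed to be unique.
final class Account {
    private final int id;   // used only to decide the lock order
    private long balance;

    Account(int id, long balance) {
        this.id = id;
        this.balance = balance;
    }

    synchronized long getBalance() {
        return balance;
    }

    // Always locks the account with the smaller id first,
    // whichever direction the money is moving in.
    static void transfer(Account from, Account to, long amount) {
        Account first = from.id < to.id ? from : to;
        Account second = from.id < to.id ? to : from;
        synchronized (first) {
            synchronized (second) {
                from.balance -= amount;
                to.balance += amount;
            }
        }
    }

    // Two threads transfer in opposite directions; returns both final balances.
    static long[] runDemo(int transfers) {
        Account a = new Account(1, 1_000);
        Account b = new Account(2, 1_000);
        Thread t1 = new Thread(() -> {
            for (int i = 0; i < transfers; i++) transfer(a, b, 1);
        });
        Thread t2 = new Thread(() -> {
            for (int i = 0; i < transfers; i++) transfer(b, a, 1);
        });
        t1.start();
        t2.start();
        try {
            t1.join();
            t2.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting", e);
        }
        return new long[] { a.getBalance(), b.getBalance() };
    }

    public static void main(String[] args) {
        long[] balances = runDemo(10_000);
        System.out.println(balances[0] + " " + balances[1]); // prints 1000 1000
    }
}
```

If transfer() simply locked from and then to, the two threads in runDemo could each grab one account and wait forever for the other.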

Lock Timeout

You can also prevent deadlocks by having a timeout on lock attempts. This means a thread will only try for so long to acquire the necessary locks before quitting (backing off) and freeing all the locks it has taken. It will then try again after a random amount of time, during which other threads can try to acquire the necessary locks.

One drawback is that if lots of threads are trying to acquire the same locks, they might end up waiting the same amount of time and therefore trying to obtain the locks at the same time over and over.
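The timeout idea could be sketched with java.util.concurrent.locks.ReentrantLock, whose tryLock(timeout, unit) gives up after a set time. The class and method names below, the 50 ms timeout and the 1-19 ms back-off are all assumptions picked for the example, not recommended values.

```java
import java.util.concurrent.ThreadLocalRandom;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.locks.ReentrantLock;

// Hypothetical helper class for illustration only.
final class TimedLocking {
    // Runs `action` while holding both locks. Returns false, holding nothing,
    // if either lock could not be acquired within the timeout.
    static boolean tryWithBoth(ReentrantLock a, ReentrantLock b,
                               long timeoutMillis, Runnable action)
            throws InterruptedException {
        if (!a.tryLock(timeoutMillis, TimeUnit.MILLISECONDS)) {
            return false;
        }
        try {
            if (!b.tryLock(timeoutMillis, TimeUnit.MILLISECONDS)) {
                return false; // the outer finally block releases `a`
            }
            try {
                action.run();
                return true;
            } finally {
                b.unlock();
            }
        } finally {
            a.unlock();
        }
    }

    // Keeps retrying, sleeping a random 1-19 ms between attempts.
    static void runWithBoth(ReentrantLock a, ReentrantLock b, Runnable action) {
        try {
            while (!tryWithBoth(a, b, 50, action)) {
                Thread.sleep(ThreadLocalRandom.current().nextInt(1, 20));
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while acquiring locks", e);
        }
    }

    public static void main(String[] args) {
        ReentrantLock a = new ReentrantLock();
        ReentrantLock b = new ReentrantLock();
        runWithBoth(a, b, () -> System.out.println("holding both locks"));
    }
}
```

The random sleep is there to reduce the chance of threads retrying in lockstep, which is the drawback described above.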

Deadlock Detected

What do you do if you have a deadlock? You can release all the locks and wait a random amount of time before retrying, but this can have the same problems as the lock timeout described above. A better way would be to assign a priority to the threads so that only one or a few threads, chosen by priority, back off while the rest carry on.

References

Processes:

- http://computer.howstuffworks.com/operating-system5.htm

- http://en.wikipedia.org/wiki/Process_(computing)

Mutexes:

Deadlocks:

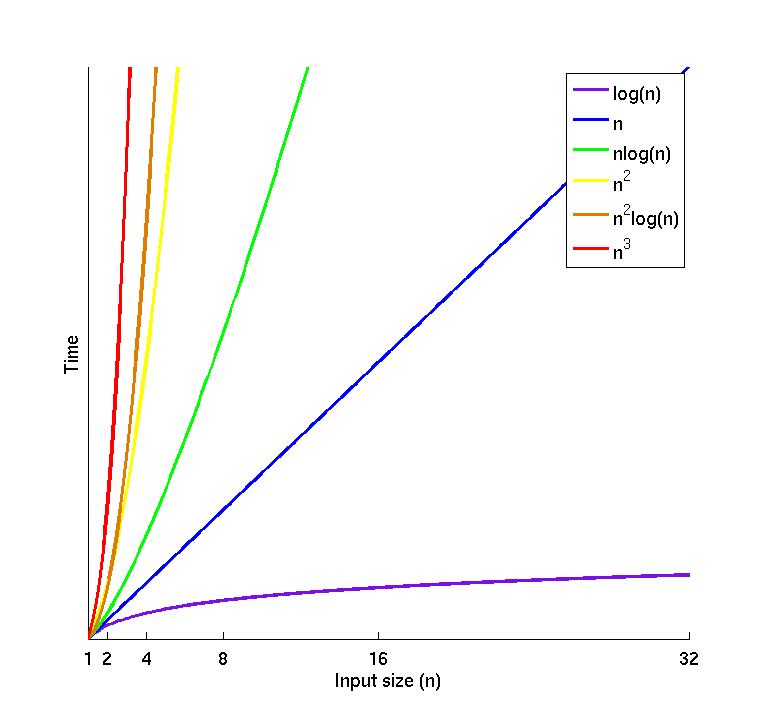

An Intro to Big Oh Notation with Java

Big Oh notation is used in computer science to describe the complexity of an algorithm in terms of time and space (memory). It is most often used to describe the worst-case scenario of what happens when an algorithm is run with N values, and it is really only useful when talking about large sets of data.

| n | constant | logarithmic | linear | linearithmic | quadratic | cubic |
|---|---|---|---|---|---|---|
| | O(1) | O(log N) | O(N) | O(N log N) | O(N^2) | O(N^3) |
| 1 | 1 | 0 | 1 | 0 | 1 | 1 |
| 2 | 1 | 1 | 2 | 2 | 4 | 8 |
| 4 | 1 | 2 | 4 | 8 | 16 | 64 |
| 8 | 1 | 3 | 8 | 24 | 64 | 512 |
| 16 | 1 | 4 | 16 | 64 | 256 | 4,096 |
| 1,024 | 1 | 10 | 1,024 | 10,240 | 1,048,576 | 1,073,741,824 |
| 1,048,576 | 1 | 20 | 1,048,576 | 20,971,520 | ~10^12 | ~10^18 |

Source: table source here also big thanks to Dean who made the graph for me 🙂

Explanation

O(1) = irrespective of changing the size of the input, time stays the same

O(N) = as you increase the size of input, the time taken to complete operations scales linearly with that size

O(log N) = growth curve that peaks at the beginning and slowly flattens out as the size of the data sets increase

O(N^2) = time grows with the square of the input size, so doubling the input roughly quadruples the time taken

Java Example for O(1):

public static boolean isArrayOver100(String[] args) {

if (args.length > 100)

return true;

return false;

}

Java Example for O(N):

public static boolean contains(String[] args, String value) {

for (int i = 0; i < args.length; i++) {

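// equals() compares the characters; == would only compare object references (assumes value is not null)

if (value.equals(args[i]))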

return true;

}

return false;

}

Java Example for O(log N):

public static int binarySearch(int[] toSearch, int key) {

int fromIndex = 0;

int toIndex = toSearch.length - 1;

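while (fromIndex <= toIndex) {

// subtract before halving so the midpoint stays inside the current range

int midIndex = (toIndex - fromIndex) / 2 + fromIndex;

int midValue = toSearch[midIndex];

if (key > midValue) {

// step past the midpoint; midIndex++ would assign the old value and never advance

fromIndex = midIndex + 1;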

} else if (key < midValue) {

toIndex = midIndex - 1;

} else {

return midIndex;

}

}

return -1;

}

See my other post on binary search here.

Java Example for O(N^2):

public static boolean containsDuplicates(String[] args) {

for (int i = 0; i < args.length; i++) {

for (int j = 0; j < args.length; j++) {

if (i == j)

break;

// compare contents rather than references (assumes no null elements)

if (args[i].equals(args[j]))

return true;

}

}

return false;

}

I took this post as my inspiration as I found it to be a great introduction, but have used Java for the code examples instead.

Implementing Binary Search in Java

What is it?

Binary Search is a technique used to search sorted data sets. It works by comparing the middle element against the search value. If the search value is greater than the middle element, a subset is created out of the upper half of the set; if it is smaller, the lower half is used. The same operation is then repeated on the subset until the value is either found and its index returned, or not found and -1 returned.

eg. If we start with a set of ints: [1, 4, 6, 8, 9, 10, 11, 13, 15] and our search value = 11

- Choose the middle element: 9

- Is key == 9? No so we can’t break early

- Is 9 > 11

- No, so take the upper half and create a subset [10, 11, 13, 15]

- Choose the middle element: 13 (4/2 =2 which is the third element in the array as arrays are zero based)

- Is 13 > 11

- Yes, so take the lower half and create a subset [10, 11]

- Choose the middle element: 11

- Is 11 == 11 yes – we have found our element

Binary Search in Java

I have created my own Java binary search method:

public static int binarySearch(int[] toSearch, int key) {

int fromIndex = 0;

int toIndex = toSearch.length - 1;

while (fromIndex <= toIndex) {

int midIndex = (toIndex - fromIndex) / 2 + fromIndex;

int midValue = toSearch[midIndex];

if (key > midValue) {

fromIndex = midIndex + 1;

} else if (key < midValue) {

toIndex = midIndex - 1;

} else {

return midIndex;

}

}

return -1;

}

Take a look at the Arrays.binarySearch() method to see how the Java source code implements the search. If you don’t have the Java source code attached to your favourite IDE I suggest you do so; it’s a great way to learn how everything works.
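As a quick check, here is the method above run against the array from the worked example. The class name BinarySearchDemo is only for illustration. One thing to note: because the method rounds the midpoint down, its second probe lands on index 6 (the value 11) rather than on 13 as in the walkthrough, so it finds the key one step sooner.

```java
import java.util.Arrays;

// Hypothetical wrapper class so the method can be run on its own.
final class BinarySearchDemo {
    static int binarySearch(int[] toSearch, int key) {
        int fromIndex = 0;
        int toIndex = toSearch.length - 1;
        while (fromIndex <= toIndex) {
            int midIndex = (toIndex - fromIndex) / 2 + fromIndex;
            int midValue = toSearch[midIndex];
            if (key > midValue) {
                fromIndex = midIndex + 1;   // search the upper half
            } else if (key < midValue) {
                toIndex = midIndex - 1;     // search the lower half
            } else {
                return midIndex;            // found
            }
        }
        return -1;                          // not found
    }

    public static void main(String[] args) {
        int[] data = {1, 4, 6, 8, 9, 10, 11, 13, 15};
        System.out.println(binarySearch(data, 11));        // prints 6
        System.out.println(binarySearch(data, 7));         // prints -1
        System.out.println(Arrays.binarySearch(data, 11)); // prints 6
    }
}
```

For a key that is missing, Arrays.binarySearch() returns a negative value that encodes the insertion point rather than always returning -1, so the two methods only agree on keys that are present.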

Complexity

The complexity of the binary search is O(log n).

Why use Binary Search?

Binary Search is quicker than searching through every element (O(n)) as it halves the number of elements to search on each iteration. This halving means that the number of steps grows quickly at first and then flattens out as the size of the input data set increases, which makes it a good fit for large data sets.
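To put some numbers on that, the sketch below counts how many elements each approach inspects when looking for a value that is not in a sorted array of 1,048,576 ints. The class and method names are made up for the example, and the array is simply filled with 0, 1, 2 and so on.

```java
// Hypothetical helper class for counting search steps.
final class SearchSteps {
    // Counts the elements inspected by a front-to-back scan.
    static int linearSteps(int[] sorted, int key) {
        int steps = 0;
        for (int value : sorted) {
            steps++;
            if (value == key) {
                break;
            }
        }
        return steps;
    }

    // Counts the midpoints inspected by a binary search.
    static int binarySteps(int[] sorted, int key) {
        int steps = 0;
        int from = 0;
        int to = sorted.length - 1;
        while (from <= to) {
            steps++;
            int mid = (to - from) / 2 + from;
            if (key > sorted[mid]) {
                from = mid + 1;
            } else if (key < sorted[mid]) {
                to = mid - 1;
            } else {
                break;
            }
        }
        return steps;
    }

    // Builds the sorted array 0, 1, 2, ..., n - 1.
    static int[] sortedArray(int n) {
        int[] data = new int[n];
        for (int i = 0; i < n; i++) {
            data[i] = i;
        }
        return data;
    }

    public static void main(String[] args) {
        int n = 1 << 20;                 // 1,048,576 elements
        int[] data = sortedArray(n);
        int missing = n;                 // larger than every element
        System.out.println("linear: " + linearSteps(data, missing)); // linear: 1048576
        System.out.println("binary: " + binarySteps(data, missing)); // binary: 21
    }
}
```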

More Info

There is a good introductory explanation of binary search and O notation here.

Pair Programming: a few thoughts

I recently read a great article on pair programming by a developer over at Atlassian where he really summed up my views on the topic. I have had really good experiences with pairing and some not so good ones.

What came out of some of the more positive experiences were:

- I came up to speed on a new system quickly

- Risks or major design questions were verbalised and dealt with sooner

- I learnt more efficient ways of coding things

- I learnt more cool Eclipse keyboard shortcuts!

- My TDD and general development skills rapidly increased

Some of the more difficult experiences:

- I felt frustrated at the sometimes slow pace

- Sometimes my pair would dominate the keyboard so it was easy to drift off into la la land

- When joining an existing team I worried I wasn’t learning enough of the system and if they were sick that day I’d be lost

With some of the pairing experiences I’ve had, I didn’t realise until much later how extremely valuable they really were.

I think in order for pairing to be successful you should:

- rotate partners often

- be open to constructive criticism on code improvements

- voice your opinion/suggestions so you can work as a team to develop a solution

- take breaks every couple of hours to check email/facebook – refresh

- ensure you get equal keyboard time

- keep trying! – pairing won’t come naturally to everyone but there are a lot of benefits to come out of it and it’s a chance to really improve as a developer

If your workplace doesn’t see the value in pairing then, as stated in the article, I think the next best thing is to make sure you get an extra set of eyes on every line of code committed, whether it’s by using a code review tool or, as I did on a former project, by having another developer check over your code before each commit.

Sonar and Hudson

I recently found out about Sonar after finding issues with running Cobertura on multi-module maven projects (see nabble post). Sonar looked like it solved this issue and added a whole stack more insight into the code quality of a project.

I got a Sonar demo up and running fine locally and convinced my team it would be a beneficial tool for us to use, so the next step for me was to install Sonar on our development box and integrate it with our CI server, Hudson.

There seem to be two ways you can achieve Sonar and Hudson integration:

- Use the Sonar Hudson plugin and run Sonar as an ‘after build’ task

- Run mvn sonar:sonar in a job within Hudson

I had a lot of issues trying to get the plugin to work so I ended up going with option 2. Below are the steps I took to get it to work.

- Firstly you need to download and install Sonar. You can do this by executing the run script or by building and deploying the war file into an application server. I had issues with Glassfish so I opted for the run script and, for now, stuck with the default Derby database.

- Start up Sonar and browse to http://hostname:9000 to make sure it’s up and running.

- Edit the Maven settings.xml located on the same machine as your Hudson server and add the repository, profile and mirror entries listed further down in this post

- Now you can create a new job in Hudson and run it with the following maven command:

clean install sonar:sonar

That’s it! You should be able to browse to your Sonar page and see your project there with coverage results. I had my job execute every day at 11pm rather than on SVN commit.

It’s worth pointing out that the above mvn command will run the tests twice, firstly during install and secondly during sonar:sonar. Take a look here for more info. In order to speed this up it might be worth adding the following parameter at the end of the command:

-Dsonar.dynamicAnalysis=reuseReports

If you want to generate Sonar results even if the build fails, add this parameter:

-Dmaven.test.failure.ignore=true

All together that would be:

clean install sonar:sonar -Dsonar.dynamicAnalysis=reuseReports -Dmaven.test.failure.ignore=true

Add in a new repository under your existing one.

<repository>

<id>sonar</id>

<name>Sonar Repository</name>

<snapshots>

<updatePolicy>daily</updatePolicy>

<checksumPolicy>ignore</checksumPolicy>

</snapshots>

<url>http://hostname:9000/deploy/maven</url>

</repository>

Add in a new sonar profile

<profile>

<id>sonar</id>

<activation>

<activeByDefault>true</activeByDefault>

</activation>

<properties>

<sonar.jdbc.url>jdbc:derby://localhost:1527/sonar;create=true</sonar.jdbc.url>

<sonar.jdbc.driver>org.apache.derby.jdbc.ClientDriver</sonar.jdbc.driver>

<sonar.jdbc.username>sonar</sonar.jdbc.username>

<sonar.jdbc.password>sonar</sonar.jdbc.password>

<sonar.host.url>http://hostname:9000</sonar.host.url>

</properties>

</profile>

Update your Nexus mirror so that it does not proxy the sonar repository

<mirror>

<id>hsl-repository-mirror</id>

<mirrorOf>*,!sonar</mirrorOf>

<url>http://maven-repository:8181</url>

</mirror>

If you are getting out of memory exceptions, consider editing the MAVEN_OPTS under Advanced in your job configuration to have the following value:

-Xmx256m -XX:MaxPermSize=256m

Running JavaFX project on Linux thru Hudson

If you want to get your FX project set up as a job in Hudson, simply follow the steps below and victory will be yours!

import javafx.stage.Stage;

import javafx.scene.Scene;

import javafx.scene.text.Text;

import javafx.scene.text.Font;

Stage {

title: "Application title"

width: 250

height: 80

scene: Scene {

content: Text {

font : Font {

size : 24

}

x: 10, y: 30

content: "Application content"

}

}

}

Then verify that it compiles by running the javafxc command (much like javac)

<build>
  <sourceDirectory>src</sourceDirectory>
  <plugins>
    <plugin>
      <groupId>org.apache.maven.plugins</groupId>
      <artifactId>maven-compiler-plugin</artifactId>
      <configuration>
        <compilerId>javafxc</compilerId>
        <include>**/*.fx</include>
        <fork>true</fork> <!-- NOTE: only "fork" mode supported now -->
      </configuration>
      <dependencies>
        <dependency>
          <groupId>net.sf.m2-javafxc</groupId>
          <artifactId>plexus-compiler-javafxc</artifactId>
          <version>0.3</version>
        </dependency>
      </dependencies>
    </plugin>
  </plugins>
</build>

Caused by: java.lang.NullPointerException
    at java.io.File.<init>(File.java:222)
    at net.sf.m2javafxc.javafxc.JavafxcCompiler.compile(JavafxcCompiler.java:146)
    at org.apache.maven.plugin.AbstractCompilerMojo.execute(AbstractCompilerMojo.java:493)
    ... 32 more